Beyond the Dashboard: The Move to Agentic Operations

Key takeaways

- Physical AI needs an execution layer, not more dashboards

- Operational voice is the missing interface that drives real-time coordination

- We make radio and push-to-talk computable so workflows run across live systems

In 2026, the industrial AI conversation has shifted from chatbots and robots to agentic operations: systems built to execute, not summarize.

For teams running critical infrastructure, a barrier remains. Modern operations are instrumented across cameras, drone telemetry, sensors, and systems of record such as Computer-Aided Dispatch (CAD). These systems update continuously, but they cannot ingest the one input that actually drives execution in the physical world: human voice on radio and push-to-talk.

Voice is not audio. Voice is command.

VoiceBrain: The Execution Layer for Agentic Operations in the Physical World

Agentic operations in the physical world do not scale without an execution layer. Not because the models cannot reason, but because the operational stack cannot ingest intent, align systems, and drive coordinated action with accountability.

VoiceBrain is building the execution layer that makes Physical AI operational. We turn live operational voice from radios and push-to-talk into computable, time-aligned intelligence that connects field intent to the systems already deployed in high-stakes environments.

The Silo Problem: Real Life Does Not Happen in a Database

On the tarmac, on city streets, and in the field, mission-critical decisions are spoken, not typed. Yet voice remains unstructured and ephemeral. It does not reliably trigger the rest of the operational stack.

The result is a manual burden. Command and field teams become the integration layer, correlating intent across disconnected tools under pressure. VoiceBrain already takes on that correlation work in production, linking operational voice to live systems across airports, public safety, and complex physical operations.

- Airport Operations: Teams must manually synchronize radio clearances with operational systems, creating delays between the tarmac and the terminal.

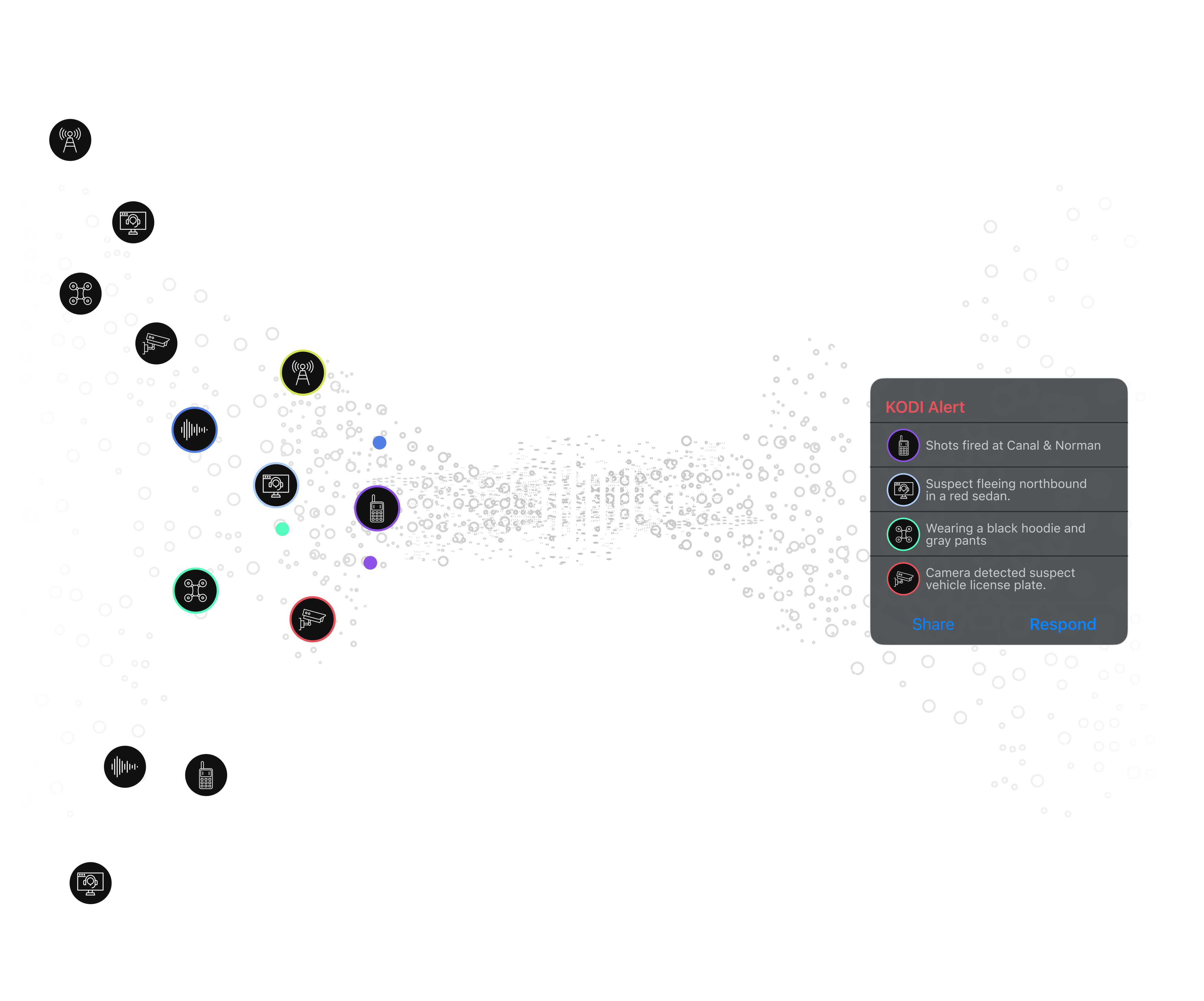

- Public Safety and RTCCs: Dispatchers become human routers, manually correlating voice intent with camera and sensor signals across multiple screens.

- Complex Physical Operations: Across logistics, manufacturing, and stadium and venue operations, coordination still runs on radio. Without voice as an input, systems update in parallel while execution stays manual.

The Agentic Voice Decisioning Layer: Execute, Search, Analyze

VoiceBrain unifies these fragments into a single, executable intelligence layer by converting live radio and push-to-talk communications into computable operational context.

- Drive Real-Time Action: Spoken intent drives workflows across live systems, reducing manual synchronization and enabling real-time coordination.

- Search the Unsearchable: Operational voice becomes a searchable data plane. Teams can query radio traffic at scale to reconstruct timelines, identify patterns, and surface investigative leads.

- Unify Accountability: Correlating voice with sensor and system data creates an audit-ready operational record of what was said, what happened, and what was executed.
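To make the search and accountability ideas above concrete, here is a minimal sketch of how transcribed radio traffic could be queried and time-aligned with system events. It is an illustration only, not VoiceBrain's implementation: the `Utterance` and `SystemEvent` schemas, the `search` and `correlate` helpers, the 30-second window, and the sample data are all assumptions, and the sketch presumes the audio has already been transcribed and timestamped.

```python
from bisect import bisect_left, bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class Utterance:
    """One transcribed radio transmission (hypothetical schema)."""
    t: float        # seconds since the start of the shift
    channel: str
    text: str


@dataclass(frozen=True)
class SystemEvent:
    """One record from a sensor or system of record (hypothetical schema)."""
    t: float        # seconds since the start of the shift
    source: str
    detail: str


def search(utterances, keyword):
    """Return utterances whose text contains the keyword, ignoring case."""
    needle = keyword.lower()
    return [u for u in utterances if needle in u.text.lower()]


def correlate(utterance, events, window_s=30.0):
    """Return events within window_s seconds of the utterance.

    Assumes `events` is already sorted by timestamp.
    """
    times = [e.t for e in events]
    lo = bisect_left(times, utterance.t - window_s)
    hi = bisect_right(times, utterance.t + window_s)
    return events[lo:hi]


# Illustrative sample data, not real traffic.
utterances = [
    Utterance(100.0, "ramp-1", "Gate 12 pushback approved"),
    Utterance(400.0, "ramp-1", "Fuel truck clear of stand 7"),
]
events = [
    SystemEvent(90.0, "camera", "tug attached at gate 12"),
    SystemEvent(115.0, "ops-db", "flight status set to pushback"),
    SystemEvent(900.0, "sensor", "stand 7 occupancy cleared"),
]

# Search the voice record, then attach nearby system events to each hit.
hits = search(utterances, "pushback")
record = [(u.text, [e.detail for e in correlate(u, events)]) for u in hits]
```

In this sketch, `record` pairs each matching transmission with the system events that occurred close to it in time, which is the basic shape of an audit-ready timeline. A production system would also need speaker and channel identification, clock synchronization across sources, and intent extraction that goes beyond keyword matching.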

Bottom Line: From Insight to Execution

Machine logs became searchable. Enterprise workflows became system-driven. Live operational voice is the last major unstructured data plane in critical operations.

Agentic operations will not be won by models alone. They will be won by infrastructure that connects intent to execution in real time.

Voice is where the mission begins. Now it becomes operational intelligence.

Further context

- RRE Ventures on Physical AI as an operating system for the real world.

- Andreessen Horowitz on the Physical AI deployment gap from frontier capability to production reality.

- POLYN on AI voice tech and the future of public safety communications.
